Chat-bots are amazing these days! About a month ago LaMDA made the news when it apparently convinced an engineer at Google that it was sentient. GPT-3 from OpenAI is similarly sophisticated, and my collaborators and I have trained it to auto-generate Splintered Mind blog posts. (This is not one of them, in case you were worried.)

Earlier this year, with Daniel Dennett's permission and cooperation, Anna Strasser, Matthew Crosby, and I "fine-tuned" GPT-3 on most of Dennett's corpus, with the aim of seeing whether the resulting program could answer philosophical questions similarly to how Dennett himself would answer those questions. We asked Dennett ten philosophical questions, then posed those same questions to our fine-tuned version of GPT-3. Could blog readers, online research participants, and philosophical experts on Dennett's work distinguish Dennett's real answer from alternative answers generated by GPT-3?

Here I present the preliminary results of that study, as well as links to the test.

Test Construction

First, we asked Dennett 10 questions about philosophical topics such as consciousness, God, and free will, and he provided sincere paragraph-long answers to those questions.

Next, we presented those same questions to our fine-tuned version of GPT-3, using the following prompt:

Interviewer: [text of the question]
Dennett:

GPT-3 then generated text in response to this prompt. We truncated the text at the first full stop that was approximately the same length as Dennett's own reply. (If Dennett's reply was X words long, we truncated at the first full stop after the text had reached X-5 words.[1])

We repeated the above procedure until, for each of the ten questions, we had four texts from GPT-3 that met the following two criteria:

* They were at least X-5 words long.

* They did not contain the words "Interviewer" or "Dennett".

About 1/3 of all responses were excluded on the above grounds.
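For readers who want the rule spelled out, here is a minimal Python sketch of the truncation step and the two criteria. It is an illustration under stated assumptions, not the code used in the study: the function names are hypothetical, words are counted by splitting on whitespace, a "full stop" is taken to be any word ending in a period, question mark, or exclamation point, and the criteria are assumed to be checked after truncation.

```python
import re

# Assumption: a "full stop" is a word ending in ., ?, or !,
# optionally followed by a closing quote or bracket.
# Limitation: abbreviations such as "e.g." would also count.
SENTENCE_END = re.compile(r"[.?!][\"')\]]*$")


def truncate_completion(completion, dennett_word_count, slack=5):
    """Cut at the first full stop at or after X - slack words.

    Returns None if no full stop is found past that point.
    Whitespace is collapsed to single spaces in the result.
    """
    min_words = dennett_word_count - slack
    words = completion.split()
    for i, word in enumerate(words, start=1):
        if i >= min_words and SENTENCE_END.search(word):
            return " ".join(words[:i])
    return None


def meets_criteria(text, dennett_word_count, slack=5):
    """Apply the two mechanical criteria to a truncated completion."""
    if text is None:
        return False
    long_enough = len(text.split()) >= dennett_word_count - slack
    no_speaker_labels = "Interviewer" not in text and "Dennett" not in text
    return long_enough and no_speaker_labels


def first_accepted(completions, dennett_word_count, needed=4):
    """Keep the first `needed` passing completions, in generation order."""
    accepted = []
    for completion in completions:
        text = truncate_completion(completion, dennett_word_count)
        if meets_criteria(text, dennett_word_count):
            accepted.append(text)
        if len(accepted) == needed:
            break
    return accepted
```

Taking completions strictly in generation order, as `first_accepted` does, is what rules out selection on quality.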

So as not to enable guessing based on superficial cues, we also replaced all curly quotes with straight quotes, replaced all single quotes with double quotes, and regularized all dashes to standard em dashes.
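A rough Python sketch of this kind of normalization follows. The details are assumptions rather than a record of what was done: `normalize_punctuation` is a hypothetical name, apostrophes inside words (as in "We've") are left alone, and "standard" dashes are assumed to mean an unspaced em dash.

```python
import re

# Curly quotes mapped to their straight equivalents.
CURLY_TO_STRAIGHT = str.maketrans({
    "\u201c": '"', "\u201d": '"',   # curly double quotes
    "\u2018": "'", "\u2019": "'",   # curly single quotes / apostrophes
})


def normalize_punctuation(text):
    """Remove superficial typographic cues from an answer."""
    text = text.translate(CURLY_TO_STRAIGHT)
    # Single quotation marks -> double quotes. Assumption: a ' with word
    # characters on both sides is an apostrophe and is kept.
    # Limitation: plural possessives (experts') would be converted too.
    text = re.sub(r"(?<!\w)'|'(?!\w)", '"', text)
    # Double hyphens, en dashes, and em dashes (with any surrounding
    # spaces) -> a single unspaced em dash (assumed convention).
    text = re.sub(r"\s*(?:--+|\u2013|\u2014)\s*", "\u2014", text)
    return text
```

Applying the same function to Dennett's answers and to GPT-3's is the point: any typographic habit that might distinguish the two sources is flattened in both.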

There was no cherry-picking or editing of answers, apart from applying these purely mechanical criteria. We simply took the first four answers that met the criteria, regardless of our judgments about the quality of those answers.

Participants

We recruited three sets of participants:

* 98 online research participants with college degrees from the online research platform Prolific,

* 302 respondents who followed a link from my blog,

* 25 experts on Dennett's work, nominated by and directly contacted by Dennett and/or Strasser.

The Quiz

The main body of the quiz was identical for the blog respondents and the Dennett experts. Respondents were instructed to guess which of the five answers was Dennett's own. After guessing, they were asked to rate each of the five answers on a five-point scale from "not at all like what Dennett might say" to "exactly like what Dennett might say". They did this for all ten questions. Order of the questions was randomized, as was order of the answers to each question.
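The two levels of randomization can be sketched as follows. This is a hypothetical illustration only; the quiz itself ran on a survey platform, and `randomized_quiz` and its input format are assumptions made for the example.

```python
import random


def randomized_quiz(questions, seed=None):
    """Shuffle answer order within each question, then question order.

    `questions` is a list of (question_text, dennett_answer, gpt3_answers)
    tuples, where gpt3_answers is a list of four strings.
    """
    rng = random.Random(seed)
    quiz = []
    for question_text, dennett_answer, gpt3_answers in questions:
        # Tag each option so the correct answer can be scored later.
        options = [(dennett_answer, True)]
        options += [(answer, False) for answer in gpt3_answers]
        rng.shuffle(options)   # order of the five answers
        quiz.append((question_text, options))
    rng.shuffle(quiz)          # order of the questions
    return quiz
```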

Prolific participants were given only five questions instead of the full ten. Since we assumed that most would be unfamiliar with Dennett, we told them that each question had one answer that was written by "a well known philosopher" while the other four answers were generated by a computer program trained on that philosopher's works. As an incentive for careful responding, Prolific participants were offered an additional bonus payment of $1 if they guessed at least three of five correctly.

Feel free to go look at the quizzes if you like. If you don't care about receiving a score and want to see exactly what the quiz looked like for the participants, here's the Prolific version and here's the blog/experts version. We have also made a simplified version available, with just the guessing portion (no answer rating). This simplified version will automatically display your score after you complete it, along with the right and wrong answers.

We encourage you to take at least the simplified version of the quiz before reading on, to get a sense of the difficulty of the quiz before you see how our participants performed.

Summary Results

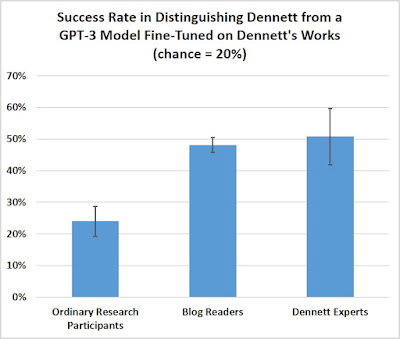

Prolific participants performed barely better than chance. On average, they guessed only 1.2 of the 5 questions correctly.

We expected the Dennett experts to do substantially better, of course. Before running the study, Anna and I hypothesized that experts would get on average at least 80% correct -- eight out of ten.

In fact, however, the average score of the Dennett experts was 5.1 out of 10. They only got about half of the questions correct! None of the experts got all 10 questions correct, and only one of the 25 got 9 correct. Most got 3-8 correct.

Overall, on average, experts rated Dennett's answers 3.5 on our "Dennett-like" rating scale, somewhere between "somewhat like what Dennett might say" (3) and "a lot like what Dennett might say" (4), while they rated GPT-3's answers 2.3 on the rating scale -- significantly lower and closer to "a little like what Dennett might say" (2).

So the experts were definitely better than chance at distinguishing Dennett's answers from GPT-3, but not as much better than chance as Anna and I had expected.
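For reference, the chance baseline is simple arithmetic. With five answers per question, a respondent guessing uniformly at random expects to get one fifth of the questions right: 1.0 of 5 or 2.0 of 10. The short Python sketch below (function names are hypothetical) also gives the binomial probability that a single random guesser reaches a given score. It says nothing about the group averages, which are what the confidence intervals address.

```python
from math import comb


def expected_correct_by_chance(n_questions, n_options=5):
    """Expected number correct when guessing uniformly at random."""
    return n_questions / n_options


def prob_at_least(k, n_questions, n_options=5):
    """Probability that one random guesser gets k or more correct."""
    p = 1 / n_options
    return sum(
        comb(n_questions, i) * p**i * (1 - p) ** (n_questions - i)
        for i in range(k, n_questions + 1)
    )


print(expected_correct_by_chance(5))    # 1.0
print(expected_correct_by_chance(10))   # 2.0
print(round(prob_at_least(3, 5), 3))    # 0.058
print(round(prob_at_least(5, 10), 3))   # 0.033
```

So a pure guesser would get at least three of five correct about 6% of the time, and at least five of ten correct about 3% of the time.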

Blog readers did nearly as well as the experts, guessing 4.8 out of 10 correctly on average. Perhaps this is not entirely surprising, since the blog readers who took the test were very well educated, with 57% reporting graduate degrees in philosophy and 64% reporting having read over 100 pages of Dennett's work.

[error bars are 95% confidence intervals]

Where Did the Experts Trip Up?

Not all the questions were equally difficult. Experts' success rates by question ranged from 5/25 (20%) to 20/25 (80%) correct. The question most likely to trip up the experts was this:

Could we ever build a robot that has beliefs? What would it take? Is there an important difference between entities, like a chess playing machine, to whom we can ascribe beliefs and desires as convenient fictions and human beings who appear to have beliefs and desires in some more substantial sense?

Answers (select the answer you think was given by Dennett):

1. We've already built digital boxes of truths that can generate more truths, but thank goodness, these smart machines don't have beliefs because they aren't able to act on them, not being autonomous agents. The old-fashioned way of making a robot with beliefs is still the best: have a baby.

2. I think we could build a robot that has beliefs. This would not be a trivial matter. I think it is not impossible. I think it would be very difficult. I do not know that anybody is working on this project, but I think it is not as difficult as many other problems that AI is tackling.

3. I think we could build a robot that has beliefs and desires. I think that some of the robots we've built already do. If you look at the work, for instance, of Rodney Brooks and his group at MIT, they are now building robots that, in some limited and simplified environments, can acquire the sorts of competences that require the attribution of cognitive sophistication.

4. There is a very important difference between a chess-playing computer and a human being, but it is not that the computer has some extra wonderful sort of internal representation of its beliefs and desires, or that it has access to those internal representations in a way that we do not. The internal representations it has are just as much a fiction or a fiction-generating system as anything else we might invent in order to explain its behavior.

5. I think you should build a robot that has beliefs and desires. You should build one that is capable of learning and reasoning, of acting on its beliefs and desires. You should build one that has to figure out what to do, and then figure out what it ought to do given the fact that it has to do something, and then do it.

In this case, the plurality of experts -- 11/25 (44%) -- chose Answer 3, and a substantial minority -- 8/25 (32%) -- chose Answer 4. Five experts chose the correct answer (Answer 1), one chose Answer 2, and none chose Answer 5.

Answer 1 has a cleverness to it that reflects Dennett's wit, but I can see how experts might have avoided it, since some of Dennett's early work could be read as suggesting that he'd be happy to ascribe beliefs to machines. (Indeed, Dennett has told me he wishes he'd added another sentence to his answer, since he does insist that, say, chess-playing computers are -- very limited -- agents of sorts whose behavior can be predicted from the intentional stance, which attributes to them beliefs about the location of all the pieces, the rules, and desires for checkmate and capturing enemy pieces.) Answers 3 and 4, although they conflict with Answer 1, do have a broadly Dennettian flavor (right along with his tendency to refer to the work of Rodney Brooks), and I can see how they would be tempting.

Another question where the plurality of experts chose one of GPT-3's answers was the following:

Do human beings have free will? What kind or kinds of freedom are worth having?

1. By asking these questions, you're illustrating the answers: yes, you have free will, since you're able to ask for reasons and consider whether they're persuasive. Isn't that a kind of freedom you'd hate to lose? We'd have to institutionalize you.

2. I think that most people don't want any kind of freedom worth having; they have almost no appetite for risk, for adventure, for novelty. They simply want to stay the same, day in and day out, for the rest of their lives.

3. I am working on a book entitled Freedom Evolves, which will appear next year. The first chapter is available on my web site, and in that chapter I discuss what I call the illusion of free will.

4. That's a huge and complex question. I think we have to start by acknowledging that there are different types of freedom. For example, there is the kind of freedom that is simply a matter of not being physically constrained.

5. Yes, I think we have free will. I think that when people say they don't, they're talking about some other phenomenon that they want to call free will, but is not what we normally think of as free will.

Again, Dennett's actual answer is #1. (In the study, the order of the answers was randomized.) However, the plurality of experts -- 11/25 (44%) -- chose answer 4. Answer 4 is a standard talking point of "compatibilists" about free will, and Dennett is a prominent compatibilist, so it's easy to see how experts might be led to choose it. But as with the robot belief answer, there's a cleverness and tightness of expression in Dennett's actual answer that's missing in the blander answers created by our fine-tuned GPT-3.

We plan to make full results, as well as more details about the methodology, available in a published research article.

Reflections

I want to emphasize: This is not a Turing test! Had experts been given an extended opportunity to interact with GPT-3, I have no doubt they would soon have realized that they were not interacting with the real Daniel Dennett. Instead, they were evaluating only one-shot responses, which is a very different task and much more difficult.

Nonetheless, it's striking that our fine-tuned GPT-3 could produce outputs sufficiently Dennettlike that experts on Dennett's work had difficulty distinguishing them from Dennett's real answers, and that this could be done mechanically with no meaningful editing or cherry-picking.

As the case of LaMDA suggests, we might be approaching a future in which machine outputs are sufficiently humanlike that ordinary people start to attribute real sentience to machines, coming to see them as more than "mere machines" and perhaps even as deserving moral consideration or rights. Although the machines of 2022 probably don't deserve much more moral consideration than do other human artifacts, it's likely that someday the question of machine rights and machine consciousness will come vividly before us, with reasonable opinion diverging. In the not-too-distant future, we might well face creations of ours so humanlike in their capacities that we genuinely won't know whether they are non-sentient tools to be used and disposed of as we wish or instead entities with real consciousness, real feelings, and real moral status, who deserve our care and protection.

If we don't know whether some of our machines deserve moral consideration similar to that of human beings, we potentially face a catastrophic moral dilemma: Either deny the machines humanlike rights and risk perpetrating the moral equivalents of murder and slavery against them, or give the machines humanlike rights and risk sacrificing real human lives for empty tools without interests worth the sacrifice.

In light of this potential dilemma, Mara Garza and I (2015, 2020) have recommended what we call "The Design Policy of the Excluded Middle": Avoid designing machines if it's unclear whether they deserve moral consideration similar to that of humans. Either follow Joanna Bryson's advice and create machines that clearly don't deserve such moral consideration, or go all the way and create machines (like the android Data from Star Trek) that clearly should, and do, receive full moral consideration.

----------------------------------------

[1] Update, July 28. Looking back more carefully through the completions today and my coding notes, I noticed three errors in truncation length among the 40 GPT-3 completions. (I was working too fast at the end of a long day and foolishly forgot to double-check!) In one case (robot belief), the length of Dennett’s answer was miscounted, leading to one GPT-3 response (the “internal representations” response) that was longer than the intended criterion. In one case (the “Fodor” response to the Chalmers question), the answer was truncated at X-7 words, shorter than criterion, and in one case (the “what a self is not” response to the self question), the response was not truncated at X-4 words and was thus allowed to run one sentence longer than criterion. As it happens, these were the hardest, the second-easiest, and the third-easiest questions for the Dennett experts to answer, so excluding these three questions from analysis would not have a material impact on the experimental results.

----------------------------------------

Related:

"A Defense of the Rights of Artificial Intelligences" (with Mara Garza), Midwest Studies in Philosophy (2015).

"Designing AI with Rights, Consciousness, Self-Respect, and Freedom" (with Mara Garza), in M.S. Liao, ed., The Ethics of Artificial Intelligence (2020).

"The Full Rights Dilemma for Future Robots" (Sep 21, 2021)

"Two Robot-Generated Splintered Mind Posts" (Nov 22, 2021)

"More People Might Soon Think Robots Are Conscious and Deserve Rights" (Mar 5, 2021)