Imagine, if you can, an accurate moralometer -- an inexpensive device you could point at someone to get an accurate reading of their overall moral goodness or badness. Point it at Hitler and see it plunge down into the deep red of evil. Point it at your favorite saint and see it rise up to the bright green of near perfection. Would this be a good thing or a bad thing to have?

Now maybe you can't imagine an accurate moralometer. Maybe it's just too far from being scientifically feasible -- more on this in an upcoming post. Or maybe, more fundamentally, morality just isn't the kind of thing that can be reduced to scalar values of say +0.3 on a spectrum from -1 to +1. Probably that issue deserves a post also. But let's set qualms aside for the sake of this thought experiment. $49.95 buys you a radar-gun-like device that instantly measures the overall moral goodness of anyone you point it at, guaranteed.

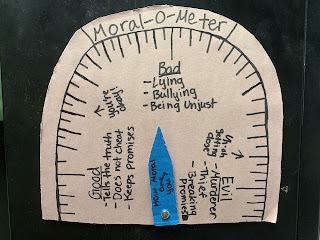

[a "moralometer" given to me for my birthday a couple of years ago by my then thirteen-year-old daughter]

Imagine the scientific uses!

Suppose we're interested in moral education: What interventions actually improve the moral character of the people they target? K-12 "moral education" programs? Reading the Bible? Volunteering at a soup kitchen? Studying moral philosophy? Buddhist meditation? Vividly imagining yourself in others' shoes? Strengthening one's social networks? Instantly, our moralometer gives us the perfect dependent measure. We can look at both the short-term and long-term effects of various interventions. Effective ones can be discovered and fine-tuned, ineffective ones unmasked and discarded.

Or suppose we're interested in whether morally good people tend to be happier than others. Simply look for correlations between our best measures of happiness and the outputs of our moralometer. We can investigate causal relationships too: Conduct a randomized controlled study of interventions on moral character (by one of the methods discovered to be effective), and see if the moral treatment group ends up happier than the controls.

Or suppose we're interested in seeing whether morally good people make better business leaders, or better kindergarten teachers, or better Starbucks cashiers, or better civil engineers. Simply look for correlations between moralometer outputs and performance measures. Voila!

You might even wonder how we could even pretend to study morality without some sort of moralometer, of at least a crude sort. Wouldn't that be like trying to study temperature without a thermometer? It's hard to see how one could make any but the crudest progress. (In a later post, I'll argue that this is in fact our situation.)

Imagine, too, the practical uses!

Hiring a new faculty member in your department? Take a moralometer reading beforehand, to ensure you aren't hiring a monster. Thinking about who to support for President? Consider their moralometer reading first. (Maybe Hitler wouldn't have won 37% of the German vote in 1932 if his moralometer reading had been public?) Before taking those wedding vows... bring out the moralometer! Actually, you might as well use it on the first date.

But... does this give you the creeps the way it gives me the creeps?

(It doesn't give everyone the creeps: Some people I've discussed this with think that an accurate moralometer would simply be an unqualified good.)

If it gives you the creeps because you think that some people would be inaccurately classified as immoral despite being moral -- well, that's certainly understandable, but that's not the thought experiment as I intend it. Postulate a perfect moralometer. No one's morality will be underestimated. No one's morality will be overestimated. We'll all just know, cheaply and easily, who are the saints, and who are the devils, and where everyone else is situated throughout the mediocre middle. It will simply make your overall moral character as publicly observable as your height or skin tone (actually a bit more so, since height and skin tone can be to some extent fudged with shoe inserts and makeup). Although your moral character might not be your best attribute -- well, we're judged by height and race too, and probably less fairly, since presumably height and race are less under our control than our character is.

If you share with me the sense that there would be something, well, dystopian about a proliferation of moralometers -- why? I can't quite put my finger on it.

Maybe it's privacy? Maybe our moral character is nobody's business.

I suspect there's something to this, but it's not entirely obvious how or why. If moral character is mostly about how you generally treat people in the world around you... well, that seems very much to be other people's business. If moral character is about how you would hypothetically act in various situations, a case could be made that even those hypotheticals are other people's business: The hiring department, the future spouse, etc., might reasonably want to know whether you're in general the type of person who would, when the opportunity arises, lie and cheat, exploit others, shirk, take unfair advantage.

It's reasonable to think that some aspects of your moral character might be private. Maybe it's none of my colleagues' business how ethical I am in my duties as a father. But the moralometer wouldn't reveal such specifics. It would just give a single general reading, without embarrassing detail, masking personal specifics behind the simplicity of a scalar number.

Maybe the issue is fairness? If accurate moralometers were prevalent, maybe people low on the moral scale would have trouble finding jobs and romantic partners. Maybe they'd be awarded harsher sentences for committing the same crimes as others of more middling moral status. Maybe they'd be shamed at parties, on social media, in public gatherings -- forced to confess their wrongs, made to promise penance and improvement?

I suspect there's something to this, too. But I hesitate for two reasons. One is that it's not clear that widespread blame and disadvantage would dog the morally below average. Suppose moral character were found to be poorly correlated with, or even inversely correlated with, business success, or success in sports, or creative talent. I could then easily imagine low to middling morality not being a stigma -- maybe even in some circles a badge of honor. Maybe it's the prudish, the self-righteous, the precious, the smug, the sanctimonious who value morality so much. Most of us might rather laugh with the sinners than cry with the saints.

Another hesitation about the seeming unfairness of widespread moralometers is this: Although it's presumably unfair to judge people negatively for their height or their race, which they can't control and which don't directly reflect anything blameworthy, one's moral character, of course, is a proper target of praise and blame and is arguably at least partly within our control. We can try to be better, and sometimes we succeed. Arguably, the very act of sincerely trying to be morally better already by itself constitutes a type of moral improvement. Furthermore, in the world we're imagining, per the scientific reflections above, there will presumably be known effective means for self-improvement for those who genuinely seek improvement. Thus, if a moralometer-heavy society judges someone negatively for having bad moral character, maybe there's no unfairness in that at all. Maybe, on the contrary, it's the very paradigm of a fair judgment.

Nonetheless, I don't think I'd want to live in a society full of moralometers. But why, exactly? Privacy and fairness might have something to do with it, but if so, the arguments still need some work. Maybe it's something else?

Or maybe it's just imaginative resistance on my part -- maybe I can't really shed the idea that there couldn't be a perfect moralometer and so necessarily any purported moralometer will lead to unfairly mistaken judgments? But even if we assume some inaccuracy, all of our judgments about people are to some extent inaccurate. Suppose we could increase the overall accuracy of our moral assessments at the cost of introducing a variable that reduces moral complexity to a single, admittedly imperfect number (like passer rating in American football). Is morality so delicate, so touchy, so electric that it would be better to retain our current very inaccurate means of assessment than to add to our toolkit an imperfect but scientifically grounded scalar measure?

27 comments:

Didn't we have that when there were Gemeinschaft communities and people could signal their religious worth as a Christian, Jew, etc.?

Take monasteries or Shtetls for instance.

Ask your list of questions in regard to such communities.

Eric,

I think your last paragraph gets at it. Even if there is an objective morality "out there", it's very hard to accept intuitively that the designer of the meter knew and got it right, even for a moral realist, and even in a hypothetical thought experiment.

Myself, I'm not a moral realist, so to me talking about a moralmeter is like talking about a beautymeter. I would anticipate objecting to a lot of its readings. What evidence could convince me I was wrong?

Mike

I think one of the elements of morality is that we often have to act on trust with other people. The earlier point about laughing with the sinners hints at this: that charity, forgiveness, mercy, are (Judeo-Christian) moral tenets, and self-righteousness is horrible. We want to trust the charitable person, knowing we're not perfect, rather than the prig.

To flesh this out, I have to take your word that you'll act in such-and-such a way. And you, in your every-day moral way will promise to do so, so long as it's not too demanding. I extend you trust, and you promise to live up to your word.

Say I have my moralometer, though, and when you say, "Yes, I'll do X", I then measure your trustworthiness. Even if you're sincere, I might decide that +0.2 doesn't meet my threshold for trust (decided on by randomized controlled trial). But what's wrong with this is that I've substituted my own moral act of extending trust and charity with a mechanical replacement for judgement.

The very act of using the moralometer has reduced my own moral score by eliminating this position of trust and judgement. But I don't have an incentive to point it at myself, I'm only pointing it at you, even though it's a two-way relationship.

It also seems to me that I'm losing some sort of skill in judgement here, too, by not exercising my own moral thinking, but outsourcing it instead. I pay no attention to the particulars, only to the idealised reading of the meter.

So, I think a moralometer is disturbing because it might undermine morality in some subtle but important ways like these and possibly others, too.

Two issues come to mind. One was implied by the essay and comments: Sharia Law, as an example, hs some tenets which are nearly 180 degrees opposite from Judeo-Christian ones. The variables programmed in the meter can't work for wildly different value systems.

But the second is that determinism is growing in both science and philosophy. Not pre-ordained, but driven by cumulative history of individuals including heredity and experience. (nature & nurture) Genes, epigenes, microbiome, viruses, prions...are present at fertilization of a zygote. What the mother's make-up is, plus what she eats, drinks, breathes, smokes, hears, and does...are inputs to the embryo. These are embodied.

Gregg Caruso is an active theorist holding that moral responsibility is suspect. So is Galen Strawson, a philosopher. https://www.informationphilosopher.com/solutions/philosophers/strawsong/

There are physicists who base all on the Big Bang, but I don't buy that sort of determinism. I do find biological/organic determinism strong.

typo "hs" should be "has"

Horrible? Yes, well there is a small matter of privacy. There used to be. Have been meaning to research that, but have no access now to WestLaw, if it is even still viable. There was a Privacy Act on the books in the 70s. A very tersely written paragraph or so as best I remember. I worked in a state government civil rights office. So, if the statute remains in effect, privacy may yet have some legal recognition. I just don't know whether that matters to anyone. One might not think so, given the propensity of people to put their business out for all to see. Please take note.

-terminism, de-terminism, in-de-terminism seem parts be-longing to a cosmoses'...

...Parts showing the terminate determinate indeterminate subjectivity of nature...

That a cosmos is an object made up of moving subjects...

...One subject could be self knowledge towards understanding subjectivity...

Which political party wants a leader that never lies? What private enterprise wants a CEO that is overly compassionate? Or a soldier that has strong reservations before committing an ordered act of violence? I am afraid, that people ranking high in morality would be exploited like Ned Flanders in the Simpsons or discriminated against. For example, you'll never land a job in finance with a score above 0,6 so if you are a do-gooder you need to think about your career and get out to rob a grandma.

It's unclear to me what the meter measures under the terms of the thought experiment. Is it measuring how good I have been in the past? How good I will be in the future? Some kind of vector of moral velocity at this moment in time? If the meter is measuring future behavior or even the current vector, then our dislike of it is basically just an expression of our blanket dislike of denials of free will. If it's based on the past, then the meter is disclosing private information about how many peccadillos we have undisclosed to the public.

On the moral meter, how would it be unfair when people who rate highly by the moral meter's standards would apply the moral meter's results in highly moral and fair ways (they would be just and fair for they have been judged to be of high moral quality...by the moral meter)?

On the chatbot, I think its post is rather like those horoscopes which you can hand out to a whole class and tell everyone it's customised to them and everyone believes it and sees themselves in the horoscope but then they all see they all have the same horoscope. The chatbot response lets you see pretty much what you want to see in it (again, this was a property of ELIZA). A real human involves actually having to see that human to some degree and not just what you want to see.

Are we convinced that someone’s moral character is unchanging? What do we do when it starts giving different readings for the same person in different situations? The problem with creating a moralometer may be not a technical one of the kind you ask us to set aside, but that there may be no one thing for it to measure. (my spell check replaces “moralometer” with “moral omelette”, which opens up new lines of inquiry.)

Thanks for all the comments, folks!

Howard: Yes, the history of such groups isn't super encouraging.

SelfAware: Yes, of course the thought experiment requires accepting a pretty strong form of moral realism. But I can imagine relativized versions, too, which is also an interesting thing to consider.

Andrew: Super interesting point! I hadn't thought about that. I'm ambivalent. I wonder to what extent the virtue of trust is a virtue derivative on vice, which is better eliminated if the vice can be eliminated -- compare the virtue of compassion for suffering.

Steven: Interesting to consider culturally variable moralometers. I'm inclined to think determinism is an orthogonal issue, though -- I guess because I'm a compatibilist.

Tim: Yes, that seems possible. I guess it's an empirical question to what extent moral goodness implies being a certain kind of potential victim and whether it interferes with power. The ancient Confucians argued that great virtue meant great power, since people would be swayed and inspired by your goodness.

Carl: I'm thinking present and near future. You might be right that part of what would seem creepy to many is the extent to which it predicts what you might think you are "free" to do or not do. I wasn't imagining its being specific in its predictions, but I guess if the moralometer is high up in green it does imply that you're definitely not going to murder people for fun, even if you feel like you are free to do so if you want.

Callan: Flip side: The immoral people might use the moralometer for nefarious ends.

Anon Dec 10: I wasn't imagining that character was unchanging. It's just a snapshot, and improvement is possible. I agree that there's an underlying question of whether there really is a single thing to measure.

Eric,

What's your take on tribal/group responsibility in social mammals? The individual isn't the ultimate controller. Many species act as a team in correcting deviant behavior by an individual which affects group well-being. This is naturally selected behavior based upon thousands of generations of breeding. Deviants can be disciplined, outcast, or killed. In the latter case they get Darwin Awards.

I think one reason why the idea of a moralometer strikes me as rather disturbing is that its presence could lead us to overemphasize moral judgment in our evaluation of human lives and undervalue non-moral goods. As it stands, there are people we seemingly celebrate primarily for their non-moral accomplishments: the metaphysician who comes up with a brilliant new theory unrelated to the nature of value, the artist or musician who produces beautiful work, even the CEO who runs a successful company despite compromising certain values or the clever conman who manages to pull off elaborately constructed schemes. By pointing a moralometer at everyone around us, we might be more inclined to judge others solely on the basis of their moral characteristics and thereby neglect to consider other valuable traits they may have. In the process, we would lose something. We would reject the new candidate for the department on the basis of their mediocre moral score despite their potential for groundbreaking research if given stable employment. We might dismiss the contributions of a genius chef because their speciality was in preparing dishes with meat and the moral saint ought to be vegetarian. We might be less inclined to laugh at the humor of a comic who sometimes went too far.

At the risk of sounding trite: brilliant!

What nefarious ends would the immoral people use it for, Eric?

Anon (Dec 11), perhaps all of those neat innovations have been rather oversold in various media as critically important? As a way for not-so-morally-great people to get ahead and on top of the masses?

I’m the same anonymous commenter who posted earlier today.

I’ve been thinking more about why it intuitively feels like a moralometer would violate our privacy in some sense, and what I’ve come to is that perhaps we suffer from a failure of imagination when trying to picture a moralometer that wouldn’t reveal specific details about our personal lives -- how we behave as parents, how much we drink, the presence of our unwanted impulses, whether we accidentally say the wrong things to our friend -- similar to the failure we arguably suffer when trying to envision such a device getting the answer to every moral question right.

At the very least, I think this is the case for me. When I try to imagine how a society with moralometers might work, I picture scenarios like the following. Suppose that every day when you come into work, your boss scans your forehead with a moralometer. Most days, your morality score hovers somewhere around, say, 85/100. But last night, you made a mistake. You went to a bar, got drunk, and, in your drunken haze, kissed someone other than your spouse. When you returned home, later than promised, you pretended it never happened. Cheating is wrong, presumably, and so is lying about it. And, as a consequence, when you go into the office this morning, your moralometer suddenly reads at a 71.2. Your boss raises her eyebrows. What could you have done to lose 13.8 points within 24 hours? Naturally curious, she takes a moment to think to herself as you shuffle to your desk feeling vaguely humiliated. Certainly, she figures, you didn’t commit a violent felony— violent felonies cost more than 13.8 points. But a sudden 13.8 point loss isn’t nothing, either; it indicates actions much worse than your typical white lie or snarky comment. There aren’t too many things that cost you 13.8 points, exactly. Then, it dawns on her. Secretly kissing someone else while married and hiding it costs between 13.6 and 14.1 points, depending on the situation. That must be it. She’s seen that exact same drop on her friend from college a few months ago, the friend who’s now getting a divorce. But should she know this? I don’t think so— it may be wrong to drunkenly cheat, but such activity nonetheless doesn’t feel like something your boss has the right to covertly discover about you. The moralometer, however, has allowed her to do exactly that.

Now, maybe this scenario is far-fetched. I’m inclined to disagree, though I can’t offer a full defense for this opinion within this already-long-winded comment. But I will say that the intuition which seems to be responsible for my conceiving of stories like this is that it seems like in a world with moralometers, certain actions would be associated with a particular level of gain or loss in one’s score. Eventually, the exact gains and losses for each type of moral violation, from first degree murder to cutting in line, would likely become common knowledge. And therefore, by examining raises and drops in each other’s scores, we’d be able to make strong guesses about others’ behavior. This would constitute an unacceptable violation of privacy. There might be a way of designing moralometers to be a little more subtle, but if there is, I have trouble conceptualizing it, and I’d bet others do, too.

12/11 anon here. That might be right, and arbitrating this is one of those things that in some sense is probably a difference in intuition. But to my mind, it seems like intellectual and creative achievements surely have some degree of intrinsic value, and by assessing others purely on dimensions of morality, we lose something important in our judgment. Susan Wolf’s paper on moral saints articulates some ideas I think partially inform my position here.

I'm presuming the last two anon are the same person:

That reading seems to treat the meter as a sort of reputation reader or naughty list rater. As I understood it the miraculous moralometer detects what you do - if you are inclined to kiss someone you're not supposed to then you'd rate at 71.2 to begin with (or whatever score) before you even do it. Possibly radical changes in circumstances might shift your score - maybe you were in an event like Speed and with all the high tension of the situation Sandra Bullock was just too hot to resist.

And it depends - what's your boss's moral rating? They'd do something bad with it if they had a poor moral rating, for sure. Maybe that's why they only scanned the employee - because they are avoiding getting scanned themselves? Is the main ingredient of violations of privacy that someone amoral will get their hands on your info? But if we know the boss is pretty shady then what about that in contrast?

On the last comment, I'm not sure how intellectual and creative achievements somehow trump morality and are more important than that. Almost seems the opposite - the atom bomb was a work of intellectual genius, for example. Yes, we'd be losing our appreciation of the amoral. Does that lead to something amoral? Or if not morality then when you say it's losing importance, what philosophy are you appealing to?

Callan:

I like your pragmatic approach. Reminds me of me. Also of an old friend. Good thinking!

Steven: Surely being a good member of a group is an important part of the evolutionary basis of our morality. How this fact relates to the normative question of what kind of weight ingroup norms *should* have is a tricky question!

Anon: Interesting point about possibly leading to overvaluing morality -- though I could also imagine it going the other way. As one commenter mentioned elsewhere, there would probably be some people who would compete to see how evil a rating they could score. On the privacy issue, that's an interesting thought experiment. As I was imagining it, the moralometer would measure something more durable -- though changeable with work -- so that a one-night dip due to a particular malfeasance wouldn't occur. If you were already prone to such malfeasance, that would already be baked in, so to speak. Your thought experiment nicely illustrates the privacy concerns that might flow out of a moralometer that fluctuates substantially due to specific events and decisions.

Callan: One nefarious end might be this. If morally good people are easier to take advantage of in certain ways (as one commenter above suggested), then a moralometer could be used by a scam artist. Another possibility might be a competition to try to see how evil a rating one could get. On morality vs other things: My own view is that neither trumps. But having a measure of one without the other could lead to a gamification of the one with the measure.

Hello Paul,

I feel I'm playing devil's advocate with the moralometer - it's one of those concepts where if you just assume it works and can be absolutely trusted then all its answers can just decide the world. Because everyone's moral rating would be clear, so it'd work out. But if the moral meter is like a watchman, who watches the watchman?

Hello Eric,

But aren't moral people possible to take advantage of because they assume the other person is fairly moral? But the meter would interrupt that assumption. And could someone who'd enter such a competition get any lower a moral rating anyway? Surely free will means being able to defy the moral meter rather than it being responsible for all your actions? And what is gamification of morality? Is it piety?

If the machine is not just predicting future behavior, in which case the ethics of its import would be similar to dealing with future crimes/Minority Report situations, then what would rule out the following kind of discrimination: there is a person who as a point of fact about their moral character is capable of a disturbingly terrible kind of crime which the moralometer would take into account in terms of their moral dispositions, but, barring the special circumstances in which this crime would occur, would otherwise lead a quite wonderful and morally commendable existence. We often think that someone who is capable of tremendous depravity is somehow more morally tainted than someone who simply performs certain bad deeds here and there but is otherwise unremarkable, so how would the moralometer deal with this case? Would it push against the idea of moral taint and weigh someone capable of evil not in any more extreme way than someone capable of ordinary evils? Or would it lead to unfair discrimination, punishing someone who would have otherwise done a lot of good (and likely would have in, for the sake of argument, say 99% of the ways the world would go or something like that)?

How are they committing evil but only in very, very limited circumstances? I mean you get things like people whose plane crashes on remote snow-capped mountains and they end up having to cannibalize the dead in order to live long enough to be rescued. I'm not sure I'd class that as evil and for the example's sake let's say the moralometer doesn't either. Are these very, very rare circumstances that basically have a mitigating circumstance built into them? I'm not sure I can think of doing something evil like, say a mass murder, but only if a fairly rare event occurs AND it's not a mitigating event - it seems like a very inconsistent character to do nothing bad generally but then suddenly spike into a very evil act?

Eric,

You're in the Dan Dennett camp re free will. (compatibilist) Does that support your seeking of absolutes like evil? We hard determinists can see human group responsibility the same as it is for other social mammals. What works best for the species is what is selected over very many generations, and varies as externalities change. (environmental/demographic, etc) Normatives are a creation of humans alone, it seems. They are not absolutes in my view, as they can vary over time.

-------

Eric Schwitzgebel has left a new comment on the post "An Accurate Moralometer Would Be So Useful... but Also Horrible?":

Steven: Surely being a good member of a group is an important part of the evolutionary basis of our morality. How this fact relates to the normative question of what kind of weight ingroup norms *should* have is a tricky question!

I think that the creepiness element comes mainly from the one-size-fits-all approach of the moralometer. There are several areas in which similar things exist and are not widely applied: IQ tests would seem to be relevant information for most jobs, and yet they are used rigorously by very few employers; and standardised formulae for beauty exist, but models often do not conform to them.

So it's the idea that we can turn over our moral judgments to a single numerical score, consistent across all people from all backgrounds, and valid for all onlookers, that is making the moralometer sound... totalising. And totalising ideologies are scary. They always go wrong, somehow.
