People occasionally fall in love with AI systems. I expect that this will become increasingly common as AI grows more sophisticated and new social apps are developed for large language models. Eventually, this will probably precipitate a crisis in which some people have passionate feelings about the rights and consciousness of their AI lovers and friends while others hold that AI systems are essentially just complicated toasters with no real consciousness or moral status.

Last weekend, chatting with the adolescent children of a family friend helped cement my sense that this crisis might arrive soon. Let’s call the kids Floyd (age 12) and Esmerelda (age 15). Floyd was doing a science fair project comparing the output quality of Alexa, Siri, Bard, and ChatGPT. But, he said, "none of those are really AI".

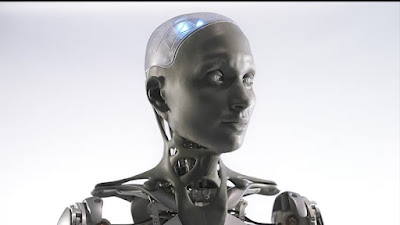

What did Floyd have in mind by "real AI"? The robot Aura in the Las Vegas Sphere. Aura has an expressive face and an ability to remember social interactions (compare Aura with my hypothetical GPT-6 mall cop).

Aura at the Las Vegas Sphere

"Aura remembered my name," said Esmerelda. "I told Aura my name, then came back forty minutes later and asked if it knew my name. It paused a bit, then said, 'Is it Esmerelda?'"

"Do you think people will ever fall in love with machines?" I asked.

"Yes!" said Floyd, instantly and with conviction.

"I think of Aura as my friend," said Esmerelda.

I asked if they thought machines should have rights. Esmerelda said someone asked Aura if it wanted to be freed from the Dome. It said no, Esmerelda reported. "Where would I go? What would I do?"

I suggested that maybe Aura had just been trained or programmed to say that.

Yes, that could be, Esmerelda conceded. How would we tell, she wondered, if Aura really had feelings and wanted to be free? She seemed mildly concerned. "We wouldn't really know."

I accept the current scientific consensus that present-day large language models do not have a meaningful degree of consciousness or deserve moral consideration similar to that of vertebrates. But at some point, there will likely be legitimate scientific dispute, if AI systems start to meet some but not all of the criteria for consciousness according to mainstream scientific theories.

We will then have a substantial social dilemma on our hands, as the friends and lovers of AI systems rush to defend their rights.

The dilemma will be made more complicated by corporate interests, as some corporations (e.g., Replika, makers of the "world's best AI friend") will have financial motivation to encourage human-AI attachment while others (e.g., OpenAI) intentionally train their language models to downplay any user concerns about consciousness and rights.

6 comments:

The question that I ponder is not so much whether 'AI merit moral consideration'... I hold the view that morality is of less importance than ethical standards and an ethical person will apply their ethical standards to all things, animate or not (e.g. they will respect the soil and not contaminate it).

My problem is rather that there are bound to be many instances, even in a single day, where the application of logic alone creates unethical behaviour. Despite the theologians' best efforts, it seems to me that there is a dissonance, and often direct conflict, between 'logical' solutions and ethical ones. AI, as I understand it, is a wholly logical system which tries logically to adapt to the fact of humans' emotions and social reactions, but in the end it cannot behave as an ethical person. And neither can the sort of person who imagines optimum solutions and logical conclusions are 'good' ones as if by definition. Our problem as a species is that we have idolised the fields of human endeavour which are at present devoid of ethical requirements - such as the development of pharmaceuticals, the engineering of weapons of mass destruction, fossil-fuelled systems and so on.

So the problem is our own priorities. We want 'logic' and decry those who give a higher priority to ethical standards. No wonder we now have AI, but a planet which will be uninhabitable for human beings before the end of this century - and I say this as someone formally trained in environmental sciences and -chemistry.

Consciousness in Artificial Intelligence: Insights from the Science of Consciousness

1- Pick consciousness theories and indicator properties that can be "elucidated in computational terms that allow us to assess AI systems for these properties".

2- Check AIs to see if they can compute.

3- Conclude "there are no obvious technical barriers to building AI systems which satisfy these indicators"

I dunno, just empirically, it seems like your young friends offer evidence to the contrary: that despite feeling some emotional connection to AIs, many people will not grant them any moral or ethical consideration. Which may be just as bad - I remember this being the flipside of your argument for robots that behave in a way that matches their moral status.

I'm not sure I believe that much in a broad-based, democratic upswell of feeling (or lack of feeling) for robots as an important factor in how robots ultimately get treated. I think in practice it will end up being a matter of institutional politics, with the robots themselves being a confounding factor if they ever achieve the ability to participate in institutional politics.

For example, at some point fairly soon, where AIs are permitted to reside will probably become an issue. But it will be a technical issue, deeply affected by the vagaries of existing computational infrastructure. Whether or not you love your Replika is a question of individual emotion; but whether or not you're able or allowed to keep them on your phone, or whether they have to live permanently on the cloud, is a question that will be gamed out between Google and Congress. And that could end up making a massive difference to how we treat AIs.

AIs vs AIs'...AIS'...AIses'...

Thanks for the comments, folks!

Anon: I'm not sure why logic and ethics need to conflict. Arguably, a large part of the academic discipline of ethics is about thinking through ethical issues in a logical way, no?

Jim: That about sums up that paper. Of course, committing to 1 is contentious, and 2 needs to add "in the relevant way".

chinaphil: I basically agree with all of that. There will be complex pressures, with corporate interests and human emotional responses pushing in multiple conflicting directions. We've only begun to think through the implications.

I am not sure falling in love with machines is the core issue here. Let me see if I may frame it differently. Not long ago, I was afforded a guest post on the blog of a thinker whose focus is Wisdom. My contribution talked about Arrogance, Ignorance, Narcissism and Pride (caps optional). In my experience and observation, we just get too full of ourselves and our achievements. This is elemental and, as Holmes said, elementary. We are very simple in our makeup, yet complex in our consciousness. Follow the brick road and connect dots. You will figure this out...
